Introduction

34.8% of enterprise data shared with AI tools is classified as sensitive — including personal data, financial records, and intellectual property — according to Cyberhaven's 2025 AI Adoption & Risk Report. Zscaler ThreatLabz documented over 4.2 million data loss prevention policy violations to AI applications in 2024 alone, each one a case where sensitive organizational data passed through a chat interface it shouldn't have.

This isn't a problem that lives in IT. HR leaders and internal communications managers who deploy or authorize chat tools own direct responsibility for what employees share, who can access it, and whether the organization faces regulatory penalties.

When an employee pastes compensation data into ChatGPT — or an AI assistant surfaces a confidential HR document to an unauthorized user — those teams bear the consequences of enabling tools without proper governance. This guide covers the specific risks, the governance frameworks that contain them, and the practical steps your organization can take now.

TL;DR

- Enterprise chat tools amplify existing data governance gaps, with most breaches traced to employee behavior rather than platform vulnerabilities

- Top threats: data leakage, prompt injection, shadow AI, credential theft, and regulatory violations

- Organizations that combine clear policy, access controls, and ongoing training consistently outperform those relying on platform defaults alone

- Purpose-built, admin-governed platforms significantly reduce attack surface versus fragmented consumer tools

Why Enterprise Chat Security Risks Deserve Serious Attention

Enterprise AI chat adoption has exploded between 2024 and 2025. Cyberhaven's Q2 2025 report reveals that AI usage frequency increased 4.6 times in 12 months and 61 times over 24 months. OpenAI reported serving over 7 million ChatGPT workplace seats with approximately 8x growth in weekly enterprise messages since November 2024. That pace of adoption means sensitive data is flowing through AI chat interfaces faster than most security policies can keep up.

HR and communications teams face particularly elevated exposure. Cyberhaven found that HR and employee records constituted 4.8% of all sensitive data input into AI tools in Q2 2025, up from 3.9% the previous year.

Employees routinely paste performance review notes, compensation spreadsheets, candidate PII (personally identifiable information), and confidential strategy documents into chat interfaces without recognizing the risk. When this data flows to non-corporate "shadow" accounts — as it frequently does — it bypasses enterprise security controls entirely and may be used for model training.

Closing these gaps requires attention across every layer of your security posture:

- Policy development — defining what data employees are permitted to share with AI tools

- Employee behavior — training staff to recognize exposure before they paste sensitive content

- Technical controls — enforcing data loss prevention, SSO, and approved platform lists

- Platform architecture — choosing enterprise-grade tools with private data handling by default

A gap in any one of these layers is enough for sensitive data to slip through.

The Most Critical Enterprise Chat Security Risks

Enterprise chat security threats fall into two categories: risks created by the data employees bring into tools, and risks inherent to the platforms and their configurations.

Data Leakage and Oversharing

The most common and damaging risk is employees pasting sensitive information directly into AI chat interfaces. The April 2023 Samsung incident provides a cautionary example: engineers in the semiconductor division inadvertently pasted proprietary source code into ChatGPT for debugging assistance, along with confidential internal meeting notes. Once entered, this data was transmitted to external servers beyond the company's control, prompting Samsung to implement a temporary ban on generative AI tools across all company devices.

Cyberhaven's research quantifies the scale: in March 2024, 27.4% of corporate data input into AI tools was sensitive, rising from just 10.7% a year prior. By 2025, that figure reached 34.8%, and 83.8% of enterprise data directed to AI tools flows to applications classified as medium, high, or critical risk.

RAG (Retrieval-Augmented Generation) compounds this exposure. When enterprise AI connects to internal document repositories, it can surface restricted files—salary spreadsheets, draft termination letters, confidential legal memos—to any employee who asks the right question.

This happens because legacy permission settings were never cleaned up, and the AI doesn't distinguish between "technically accessible" and "appropriately accessible."

Shadow AI and Unsanctioned Tool Integrations

Shadow AI refers to employees installing unvetted AI plugins, browser extensions, or note-taking bots into collaboration tools without IT approval. These applications often request broad read-access to private Slack channels, Zoom meetings, or Teams conversations where HR, legal, or financial discussions occur.

The threat is real and growing. In a campaign between December 2024 and February 2025, threat actors compromised developer accounts to push malicious updates through the official Chrome Web Store. GitLab identified at least 16 malicious Chrome extensions impacting at least 3.2 million users, designed to exfiltrate cookies, session tokens, and sensitive data. 73.8% of ChatGPT usage in work contexts occurs through personal, non-corporate accounts, according to Cyberhaven's Q2 2024 report, representing massive shadow AI adoption that bypasses all corporate controls.

Prompt Injection and AI Manipulation

For organizations running internal AI assistants connected to company knowledge bases, prompt injection is one of the most underappreciated attack surfaces. Malicious — or simply careless — inputs can override safety guardrails and extract data the system should never surface.

OWASP distinguishes two main variants:

- Direct injection: A user crafts inputs that manipulate the AI's behavior in real time

- Indirect injection: Malicious instructions are embedded in external documents or emails the AI processes — posing the greatest enterprise risk because it requires no direct attacker access

A 2025 Usenix Security paper documented the "PoisonedRAG" attack: attackers inject maliciously crafted text snippets into knowledge bases (by editing public documents the RAG system ingests). When users ask related questions, the RAG retrieves the poisoned text containing hidden instructions, achieving a 90% success rate in manipulating AI responses.

Even well-intentioned employees can trigger unintended outputs through poorly framed prompts, and the risk compounds when underlying data environments are over-permissioned.

Credential Theft and Unauthorized Account Access

Enterprise chat accounts rarely get breached directly — attackers go through the endpoint instead. Infostealer malware harvests login credentials quietly, then gives bad actors complete access to an employee's chat history and all previously shared sensitive data.

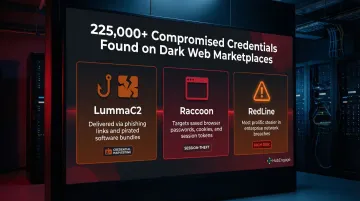

Group-IB discovered more than 225,000 compromised OpenAI/ChatGPT credentials for sale on dark web marketplaces between January and October 2023. The primary culprits were three malware families:

- LummaC2 — a credential-harvesting stealer widely distributed via phishing and cracked software

- Raccoon — known for targeting browser-stored passwords and session tokens

- RedLine — one of the most prolific infostealers in enterprise breach incidents

These stolen credentials give attackers authenticated access to organizational conversations, bypassing perimeter security entirely.

Regulatory and Compliance Exposure

Using consumer-grade or improperly configured enterprise chat tools to process employee or customer data can trigger violations of GDPR, HIPAA, and emerging frameworks like the EU AI Act.

Under GDPR, violations of basic processing principles and data subjects' rights carry fines up to €20 million or 4% of total worldwide annual turnover, whichever is higher. The Italian Data Protection Authority's 2023 enforcement action against ChatGPT—resulting in a temporary service suspension—set a precedent for how regulators will scrutinize LLM chat services handling EU personal data.

HR teams face the sharpest exposure. The data they routinely handle touches multiple regulatory frameworks simultaneously:

- Employee health information (HIPAA, ADA)

- Compensation and performance records (GDPR, state privacy laws)

- Disciplinary documentation (litigation hold obligations)

- Any data involving EU residents (EU AI Act, GDPR Article 22)

Enterprise Chat Security Best Practices

Effective security requires layering policy, technical controls, and human behavior modification. No single measure suffices.

Establish a Formal Chat Usage Policy

Organizations must define what types of data employees are prohibited from entering into AI chat tools:

- Personally identifiable information (PII)

- Compensation and salary data

- Unreleased financial information

- Customer records and proprietary data

- Source code and intellectual property

- Confidential legal or HR documents

Role-specific guidelines should reflect the actual data different departments handle. A cross-functional governance group—including HR, IT, legal, and compliance—should own and update this policy as tools evolve.

Platforms like HubEngage provide a governed environment for employee communications, where content permissions and access are centrally managed by admins. This reduces reliance on unvetted consumer tools by consolidating communications onto a purpose-built platform certified to ISO 27001 and SOC standards, with built-in GDPR and HIPAA compliance.

Implement Zero-Trust Access Controls

Zero-trust means every user, device, and application must be verified before accessing enterprise chat systems:

- Enforce multi-factor authentication (MFA) on all accounts

- Limit which employees can access which channels or AI features

- Audit permission settings regularly rather than assuming legacy access levels remain appropriate

- Configure stricter session timeout policies for SaaS and AI applications

- Implement permission-aware access controls on document stores and vector databases feeding RAG systems

These access controls must be paired with updated DLP tooling — traditional file-based approaches simply can't keep up. Cyberhaven found that 98% of data entered into AI tools is pasted directly into interfaces, not uploaded as files. Legacy DLP tools lack the browser-level visibility to inspect pasted content in web forms, leaving the vast majority of potential exfiltration undetected.

Train Employees on Data Hygiene Before the Prompt

The most effective intervention happens before an employee types anything. Regular training should cover:

- How to recognize sensitive data types

- How to anonymize or paraphrase real data before using AI tools

- What the consequences of violations are

- How to identify and use approved tools

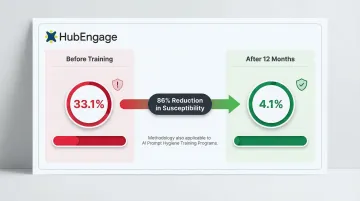

The data behind training programs like these is compelling. KnowBe4's 2025 Phishing By Industry Benchmarking Report found that ongoing security awareness training reduced employee susceptibility to phishing attacks from 33.1% to just 4.1% after 12 months — an 86% reduction. The same behavioral shift applies to AI prompt hygiene: when employees internalize what "sensitive data" looks like, they stop sharing it regardless of the tool in front of them.

Deploy Content Filtering and Data Loss Prevention (DLP) Controls

AI-aware DLP solutions can flag or block sensitive content before it leaves the organization's environment. These controls must be configured specifically for chat and AI workflows, not just email or file transfers.

Key capabilities include:

- Inspecting content being pasted into prompts

- Monitoring API traffic to AI services

- Detecting PII, credentials, and financial data in real-time

- Blocking transmissions that violate policy

Monitor, Audit, and Maintain an Incident Response Plan

Organizations that contain breaches quickly share one trait: they were already watching. Behavioral signals worth monitoring include:

- Mass data downloads or bulk prompt activity

- Off-hours access to AI tools or sensitive channels

- Unusual API call volumes to external AI services

- Repeated policy violations from the same user or team

Comprehensive logging of AI tool usage, integrated with your SIEM, enables near-real-time detection of anomalous activity. Pair this with a documented, AI-specific incident response plan — so when something triggers an alert, the team knows exactly what to do next.

Common Enterprise Chat Security Mistakes to Avoid

These mistakes show up across organizations of every size — and most are preventable with the right checks in place.

Assuming Enterprise Branding Equals Enterprise Security

Many tools marketed as "enterprise" still store data on shared infrastructure, lack granular admin controls, or have data retention policies that conflict with GDPR or HIPAA. Before deployment, verify:

- SOC 2 Type II compliance and the scope of what it actually covers

- Data residency options and where your data physically lives

- Contractual data-use terms, including whether vendor staff can access your messages

SOC 2 reports attest to historical control effectiveness during the audit period. They don't guarantee legal compliance, comprehensive coverage, protection from misconfiguration, or future performance.

Treating Security as a One-Time Setup

Organizations that configure access controls at deployment but never revisit them as headcount, roles, or tools change operate with hidden exposure. Stale permissions and orphaned accounts are two of the most reliable paths to a data incident in chat systems. Review policies quarterly — and tie access audits to employee offboarding and role changes, not just calendar cycles.

Ignoring the Human Layer

Relying entirely on technical controls while skipping employee education creates a false sense of security. Most enterprise chat breaches trace back to employee behavior — oversharing sensitive information, reusing weak passwords, or installing unauthorized plugins — not failures in the underlying platform architecture. Technical safeguards and user training have to work together.

Conclusion

Enterprise chat security is a shared responsibility between IT, HR, compliance, and every employee. The tools themselves rarely cause breaches; it's the combination of poor data hygiene, weak governance, and undertrained staff that creates real exposure.

HR and communications leaders should treat security as an ongoing operational discipline. That means:

- Reviewing policies quarterly as threats and tools evolve

- Auditing access controls on a regular schedule

- Training employees regularly — not just at onboarding

- Consolidating communications onto purpose-built, admin-governed platforms that reduce your attack surface

Platforms like HubEngage are built with this model in mind: centralized governance, role-based access, and multi-channel reach without the fragmentation that creates security gaps. When the infrastructure supports the policy, security stops being a remediation task and starts becoming a baseline standard.

Frequently Asked Questions

Can employers access ChatGPT Enterprise chat history?

Yes — admins can access usage analytics and, depending on configuration, conversation logs. Unlike the consumer product, Enterprise data is not used for model training by default, though exact retention and sharing terms vary by agreement.

What are the security risks of using ChatGPT Enterprise?

The main risks include employees sharing sensitive data in prompts, prompt injection vulnerabilities that manipulate AI behavior, credential theft via compromised endpoints, and potential regulatory exposure if data processing doesn't align with GDPR or HIPAA requirements.

What should I avoid telling ChatGPT Enterprise?

Never input PII, compensation data, unpublished financial information, proprietary source code, customer records, or anything classified as confidential under company policy. Even in Enterprise versions with stronger contractual protections, careful input practices still matter.

What is the difference between ChatGPT and ChatGPT Enterprise?

ChatGPT Enterprise offers enhanced admin controls, single sign-on, data encryption, and a contractual commitment that conversations won't be used to train OpenAI's models. However, it doesn't eliminate human behavior risks or broader data environment risks that exist upstream of the AI itself.

How can HR teams specifically reduce enterprise chat security risks?

HR teams play a direct role in reducing exposure. Practical steps include:

- Leading employee training on data classification and acceptable AI use

- Enforcing role-based access policies for chat tools

- Auditing with IT which AI tools can access HR data systems

- Consolidating communications onto governed platforms to prevent unsanctioned tool adoption

Does enterprise chat software need to be HIPAA or GDPR compliant?

Yes, if the platform communicates about patient health information (HIPAA) or processes EU resident data (GDPR), compliance is legally required. Organizations should request a Business Associate Agreement (BAA) from vendors and verify data residency and encryption standards before deployment.